Some inspiring applications

Artificial intelligence, or AI, in production environments

Ever since the first industrial revolution and the introduction of steam engines, numerous innovations have shaped the manufacturing industry. Pioneers such as Adam Smith greatly optimized the production process through their thinking and inventions. Each time, higher productivity and better cost efficiency were the key goals. Until well into the 20th century, these improvements were mainly based on (electro)mechanical and physical processes. In recent years, the largest gains have been realized by using data and information to steer production more effectively.

The next leap in efficiency gain requires more control over data

The digitization wave has been going on for some time in various industrial sectors. Alongside benefits such as more control and improved insight into the process, one important challenge keeps growing: the amount of data generated. That amount is increasing explosively, driven by the rapid growth in available computing power and the high pace of innovation in digital production applications. Processing these information flows by hand has become practically impossible. Even with the best reporting systems, people cannot discover the right connections in this data. A high degree of automatic data processing is necessary.

As noted earlier, process optimization is not new. As early as the mid-20th century, under the leadership of the East Asian industries, statistical modeling was applied to various variables in the production process. This was done with classical mathematical techniques and required an enormous amount of error-prone manual work, so it was only feasible for the largest groups. Nowadays, information flows are so complex that classical statistics can no longer be used in a cost-effective manner.

The solution?

Recent developments in the field of AI and Machine Learning make it possible to automatically analyse this flood of information and use it to optimise daily operations. Leading industry players are already applying these techniques in various pilot projects and the race to deploy them at scale has begun. Here are some practical examples.

How is AI used in practice?

Anomaly detection

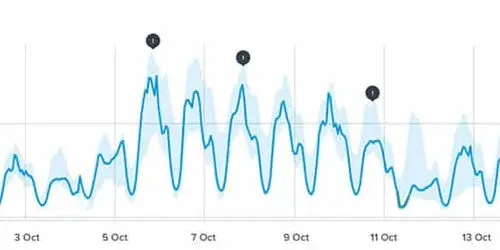

Detecting exceptions, outliers or anomalies is a crucial part of any quality assurance process. As in many other cases, 20th-century practice relied on statistical techniques to determine the number of deviations in a process. An acceptable percentage of deviation was established by means of sampling, whereby the quality of the entire device was assessed. In addition to being time-consuming manual work, this is also a very error-prone process. The underlying assumption each time is that the conditions in the sample are representative on a larger scale, which in reality is often not the case. After all, the production process itself depends on numerous other processes in HR, supply chain and IT that are constantly changing.
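To make the weakness of sampling concrete, here is a minimal sketch of the arithmetic behind a classic acceptance-sampling plan. It assumes defects occur independently, so the number of defects in a sample follows a binomial distribution. The function name and the numbers are hypothetical and chosen purely for illustration.

```python
from math import comb


def acceptance_probability(sample_size, defect_rate, max_defects):
    """Probability that a random sample contains at most `max_defects`
    defective items, assuming independent defects (binomial model)."""
    return sum(
        comb(sample_size, k)
        * defect_rate ** k
        * (1 - defect_rate) ** (sample_size - k)
        for k in range(max_defects + 1)
    )


# Hypothetical plan: inspect 50 items, accept the batch if at most 1 is defective.
# Here the true defect rate of the batch is assumed to be 2%.
p_accept = acceptance_probability(sample_size=50, defect_rate=0.02, max_defects=1)
print(f"Chance the batch passes inspection: {p_accept:.1%}")
```

Under these assumed numbers, a batch with a 2% defect rate still passes inspection in the majority of cases, which illustrates how much a sample-based verdict depends on the sample being representative.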

In addition to the practical unfeasibility of continuously monitoring all this data, the statistical modeling techniques used are not powerful enough to discover all the nuances in the data. These mathematical methods are mainly aimed at finding broad connections and do not take underlying details, also referred to as “noise”, into account. Yet it is exactly this “noise” that gives rise to unexpected deviations. By taking it into account, Artificial Intelligence can predict deviations or defects much more accurately.
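As an illustration of what automated anomaly flagging looks like in code, the sketch below scores each sensor reading with a robust z-score based on the median and the median absolute deviation (MAD). This is a deliberately simple stand-in, not one of the learned models discussed in this article. The readings, the function names and the threshold of 3.5 are assumptions for the example.

```python
from statistics import median


def robust_z_scores(values):
    """Score each value by its distance from the median, scaled by the MAD."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # At least half of the values equal the median: no usable scale,
        # so this simple sketch reports no deviation at all.
        return [0.0 for _ in values]
    # 0.6745 rescales the MAD so scores are comparable to ordinary z-scores.
    return [0.6745 * (v - med) / mad for v in values]


def flag_anomalies(values, threshold=3.5):
    """Return the indices of readings whose robust z-score exceeds the threshold."""
    scores = robust_z_scores(values)
    return [i for i, score in enumerate(scores) if abs(score) > threshold]


# Hypothetical temperature readings (degrees Celsius) from one machine.
readings = [70.1, 69.8, 70.3, 70.0, 69.9, 84.5, 70.2, 70.1]
anomalies = flag_anomalies(readings)
print(anomalies)  # indices of the flagged readings
```

A fixed rule like this only looks at one signal in isolation. The AI-based approaches described here aim to learn such boundaries from many interacting signals at once.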

Predictive maintenance

Accurately predicting machine failure is crucial to reducing opportunity costs and lost revenue. In addition, an efficient maintenance policy with targeted, proactive maintenance actions is cheaper than planning “brute force” maintenance work without knowing the real need for maintenance.

To implement an effective and lean maintenance process, large amounts of real-time data must be processed, a typical example of Big Data. Especially when different sub-organizations in a smart factory are linked into one large connected system, the amount of data can rise very quickly. Organizations face practical challenges not only in the speed at which data is collected, but also in how to store it and process it efficiently.

The application of neural networks and similar techniques is therefore crucial. Traditional approaches to predictive maintenance depend on domain-specific expertise, whereas neural networks, including recurrent architectures such as RNNs and LSTMs, can derive the necessary connections and insights themselves, across domains, given sufficient training data.
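Sequence models such as RNNs and LSTMs are typically trained on fixed-length windows of sensor history, each labeled with whether a failure followed shortly afterwards. The sketch below shows only that data preparation step, not the model itself. The function name, the window and horizon sizes, and the vibration values are hypothetical.

```python
def make_training_windows(readings, failure_times, window=4, horizon=3):
    """Turn one sensor's time series into (features, label) pairs.

    features: the `window` most recent readings.
    label:    1 if a failure occurs within `horizon` steps after the
              window ends, otherwise 0.
    """
    failures = set(failure_times)
    samples = []
    for end in range(window, len(readings) + 1):
        features = readings[end - window:end]
        # Steps end .. end + horizon - 1 form the look-ahead period.
        label = int(any(t in failures for t in range(end, end + horizon)))
        samples.append((features, label))
    return samples


# Hypothetical vibration amplitudes for time steps 0..9.
# The machine is assumed to fail at step 10, right after the last reading.
vibration = [0.20, 0.21, 0.19, 0.22, 0.25, 0.31, 0.40, 0.55, 0.80, 1.20]
samples = make_training_windows(vibration, failure_times=[10])

for features, label in samples:
    print(features, "->", label)
```

The resulting pairs could then be fed to a sequence model. The choice of `horizon` reflects how far in advance a maintenance team needs a warning, which is a business decision rather than a technical one.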

According to KPMG, these techniques will drive innovation in predictive maintenance and lead to a 36% increase in effectiveness.

Optimization of energy and raw material consumption

Electricity, water and other consumable raw materials are crucial elements of the total production cost. As factories and the number of machine-to-machine interactions grow, the dynamics of raw material use become unmanageably complex. Because of the very high number of parameters that govern these dynamics, human analysis with advanced mathematical techniques is no longer sufficient on its own and must be supported by AI-driven techniques such as impact analysis. Given the growing awareness of how we manage our planet's finite resources, Artificial Intelligence can also play an important role at a societal level in achieving sustainable use of raw materials.

Quality control with Machine Learning

Machine learning can greatly improve the reliability of an assembly line. According to Forbes, AI-driven processes can increase the quality of the final product by 35%, or reduce the error rate by a comparable margin.

Until recently, it was only possible to achieve such results with significant investments in computer vision systems. Because of the democratisation wave of AI, of which Trendskout is also a part, a wider range of Artificial Intelligence techniques can now be used in addition to computer vision, such as process visualisation, large-scale event processing and prediction. The added value is therefore no longer realised by a single system, but by the deployment of Artificial Intelligence techniques as a whole.

Classic computer vision systems specialize in one specific problem and cannot be used for other processes. New technologies such as Machine Learning and Deep Learning are able to teach themselves new connections, e.g. visual changes, allowing them to be deployed faster and on a larger scale. After all, AI models can be retrained again and again and deployed at scale without the manual reprogramming that traditional computer vision systems require.

Summary

Increasing the productivity of our industry is not a new ambition. Great efficiency gains have been achieved through digitization for decades. In the digitized production environment, the use of data analysis has increased rapidly. If we want to live up to our ambition to keep improving, we need to rely on new technologies such as Big Data, Artificial Intelligence, Machine Learning and Deep Learning to transform the generated data into action-ready information and insights. This way we achieve the objective of Industry 4.0: a hyper-connected and autonomous production system where sensors, machines and software work together to limit downtime, increase productivity and use resources responsibly.